Smarter feedback for medical education

An AI-powered clinical assessment platform that gives medical students instant, structured feedback on their SOAP notes — and gives instructors actionable analytics without the grading marathon.

01 — At-a-Glance

The problem, the solution

Medical students spend hours writing SOAP notes with little to no structured feedback. Instructors are buried under hundreds of submissions with no scalable way to evaluate them consistently. MedScor AI bridges that gap.

Problem

Medical students receive minimal, inconsistent feedback on their clinical documentation skills. Instructors lack the time and tools to provide meaningful assessments at scale — creating a critical gap in clinical training quality.

Solution

MedScor AI uses AWS Bedrock to automatically evaluate SOAP notes against clinical rubrics, delivering instant structured feedback to students and real-time progress analytics to instructors — all within a clean, focused interface.
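To make the evaluation step concrete, here is a minimal sketch of how a rubric-based scoring call could be structured. It is not the team's actual implementation: the rubric criteria, point values, prompt wording, and JSON response shape are all assumptions for illustration, and `call_model` is a hypothetical stand-in for whatever function sends a prompt to a Bedrock-hosted model and returns its text reply.

```python
import json
from dataclasses import dataclass
from typing import Callable

# Hypothetical rubric: criterion names and maximum points are illustrative only.
RUBRIC = {"Subjective": 5, "Objective": 5, "Assessment": 5, "Plan": 5}


@dataclass
class CriterionFeedback:
    criterion: str
    score: int
    max_score: int
    comment: str


def build_prompt(note: str, rubric: dict[str, int]) -> str:
    """Assemble a prompt asking the model to score each rubric criterion."""
    criteria = "\n".join(f"- {name} (0-{pts})" for name, pts in rubric.items())
    return (
        "Score the SOAP note against each rubric criterion.\n"
        'Reply with a JSON list of objects with keys "criterion", "score", "comment".\n'
        f"Rubric:\n{criteria}\n\nSOAP note:\n{note}"
    )


def evaluate_note(
    note: str,
    call_model: Callable[[str], str],  # assumed: prompt in, raw model text out
    rubric: dict[str, int] = RUBRIC,
) -> list[CriterionFeedback]:
    """Turn the model's JSON reply into structured, rubric-bounded feedback."""
    raw = call_model(build_prompt(note, rubric))
    feedback = []
    for item in json.loads(raw):
        name = item["criterion"]
        if name not in rubric:
            continue  # ignore criteria the rubric does not define
        max_pts = rubric[name]
        score = max(0, min(int(item["score"]), max_pts))  # clamp to valid range
        feedback.append(CriterionFeedback(name, score, max_pts, item["comment"]))
    return feedback
```

Passing the model call in as a function keeps the parsing and clamping logic testable without network access. A real integration would also need to handle malformed model output, which this sketch does not.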

02 — My Role

Lead Product Designer, end to end

In 48 hours, I owned the full design process — from understanding the clinical workflow to shipping a production-ready prototype that our team presented to judges.

03 — The Challenge

A broken loop in clinical education

Medical education relies heavily on SOAP notes — structured clinical documentation that students write to demonstrate diagnostic reasoning. But the feedback loop is broken on both ends.

Students write dozens of SOAP notes per rotation but receive only generic or delayed feedback, which makes it impossible to course-correct in time. Instructors receive hundreds of submissions and can meaningfully review only a fraction of them.

How Might We

"How might we give medical students immediate, rubric-based feedback on their SOAP notes — while giving instructors visibility into class-wide performance patterns — without adding to anyone's workload?"

04 — Personas

Two users, one system

The platform needed to serve fundamentally different mental models — a learner seeking growth signals and an educator seeking accountability data. Both needed clarity, just in different forms.

About

Juggling rotations, studying for Step 2, and writing SOAP notes for every patient encounter. Motivated but often unsure if her documentation is meeting clinical expectations.

Goals

- Understand exactly where her SOAP notes fall short

- Get feedback immediately after submission, not weeks later

- Track improvement across multiple submissions over time

- Build confidence before entering clinical practice

Frustrations

- "I don't know if I'm improving or just repeating the same mistakes"

- Feedback is too vague — "good structure" doesn't help her improve

- Long wait times mean she's moved on to new cases by the time she gets a review

About

Managing patient care alongside teaching responsibilities. Has 24 students this rotation, each submitting 3–5 SOAP notes per week. Cares deeply about student growth but can't give everyone equal attention.

Goals

- Identify which students are struggling before it's too late in the rotation

- See class-wide patterns to adjust teaching emphasis

- Reduce time spent on administrative grading

- Ensure consistent rubric application across all students

Frustrations

- "I have 120 SOAP notes to review this week. I can't give every one real attention."

- No visibility into who's consistently missing the same clinical reasoning step

- Grading is inconsistent — different instructors apply the rubric differently

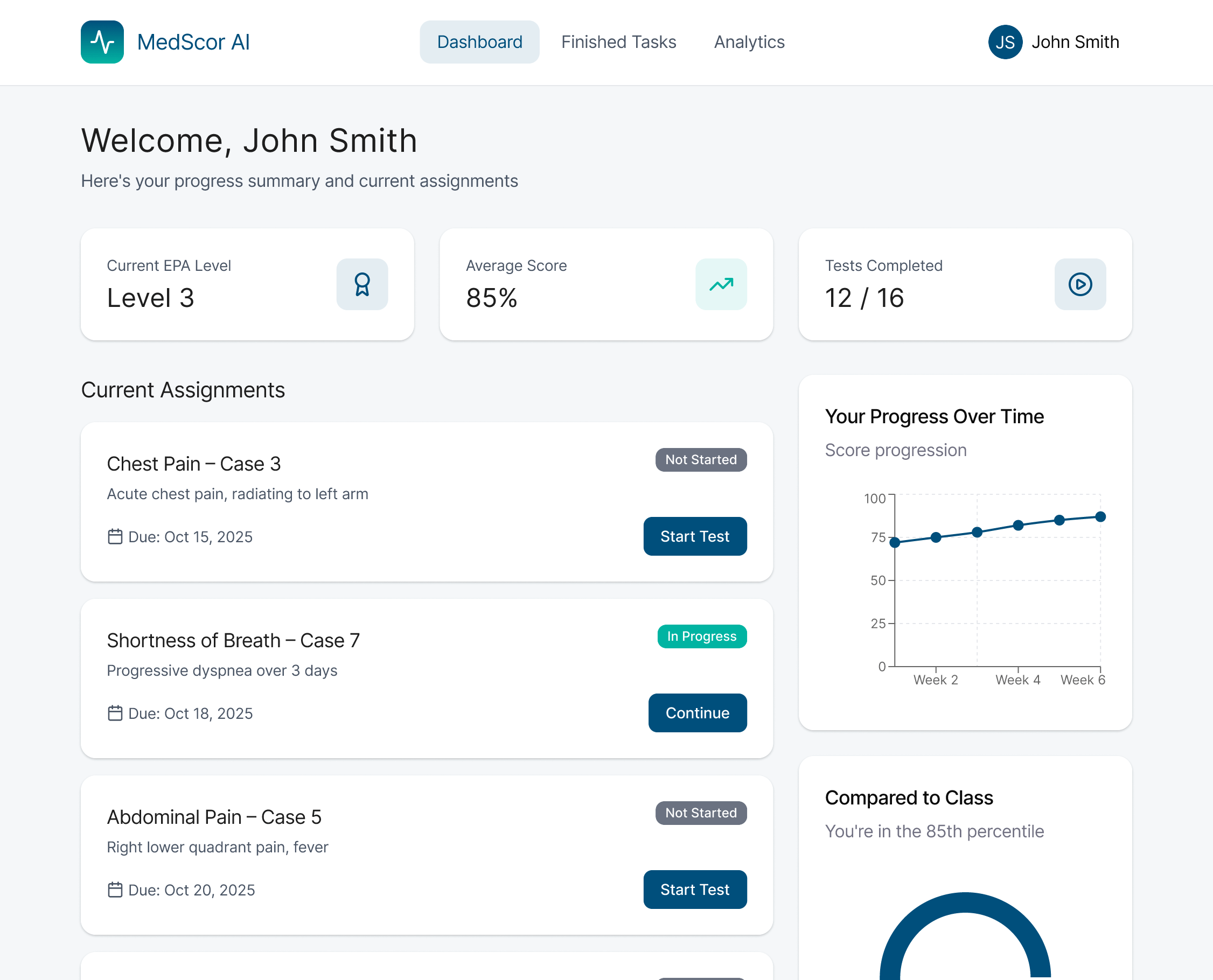

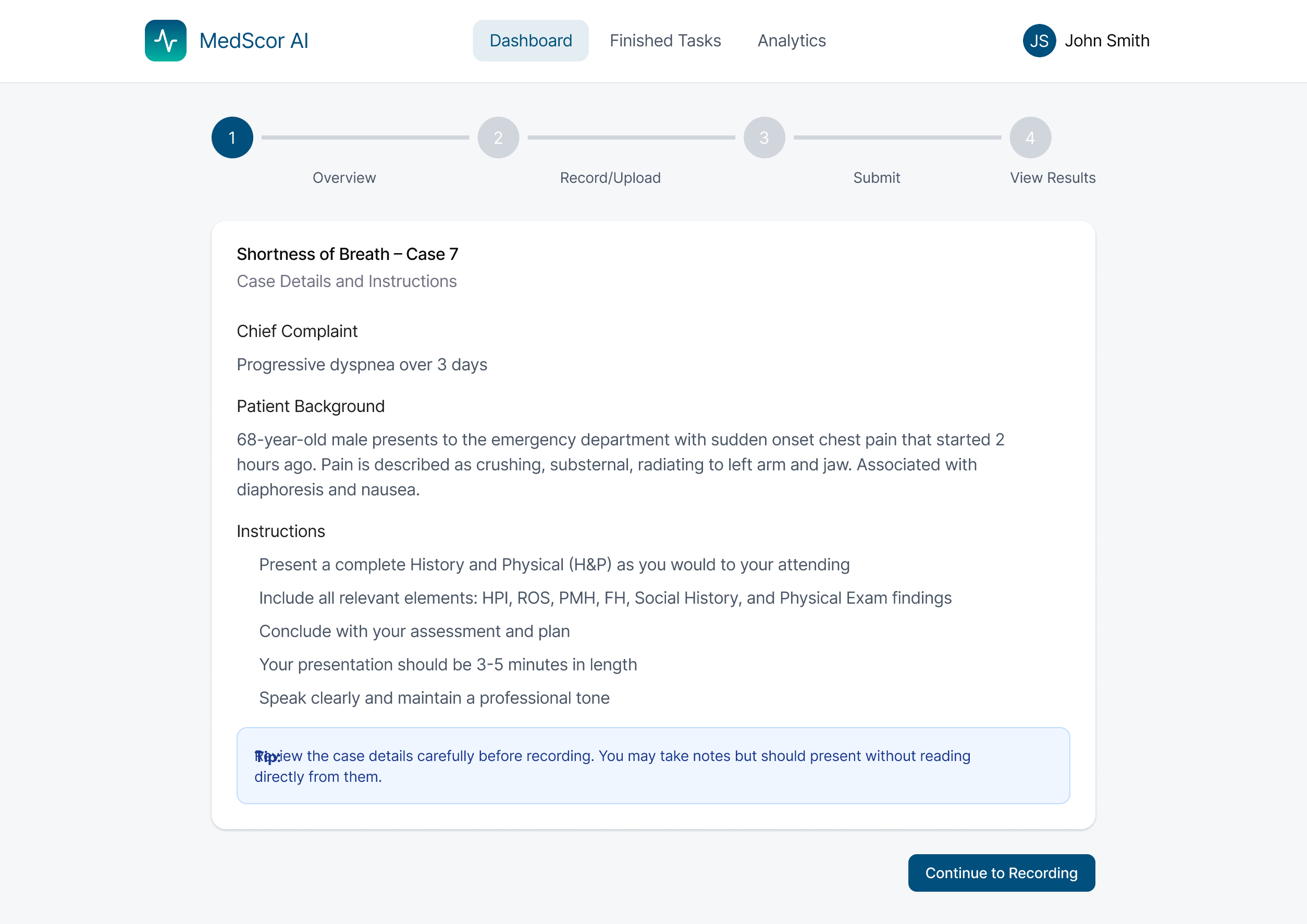

05 — Student Flow

From submission to clarity

The student experience is built around a single premise: the feedback should feel like a conversation with a knowledgeable mentor, not a grading system. Every step is designed to minimize friction and maximize learning signal.

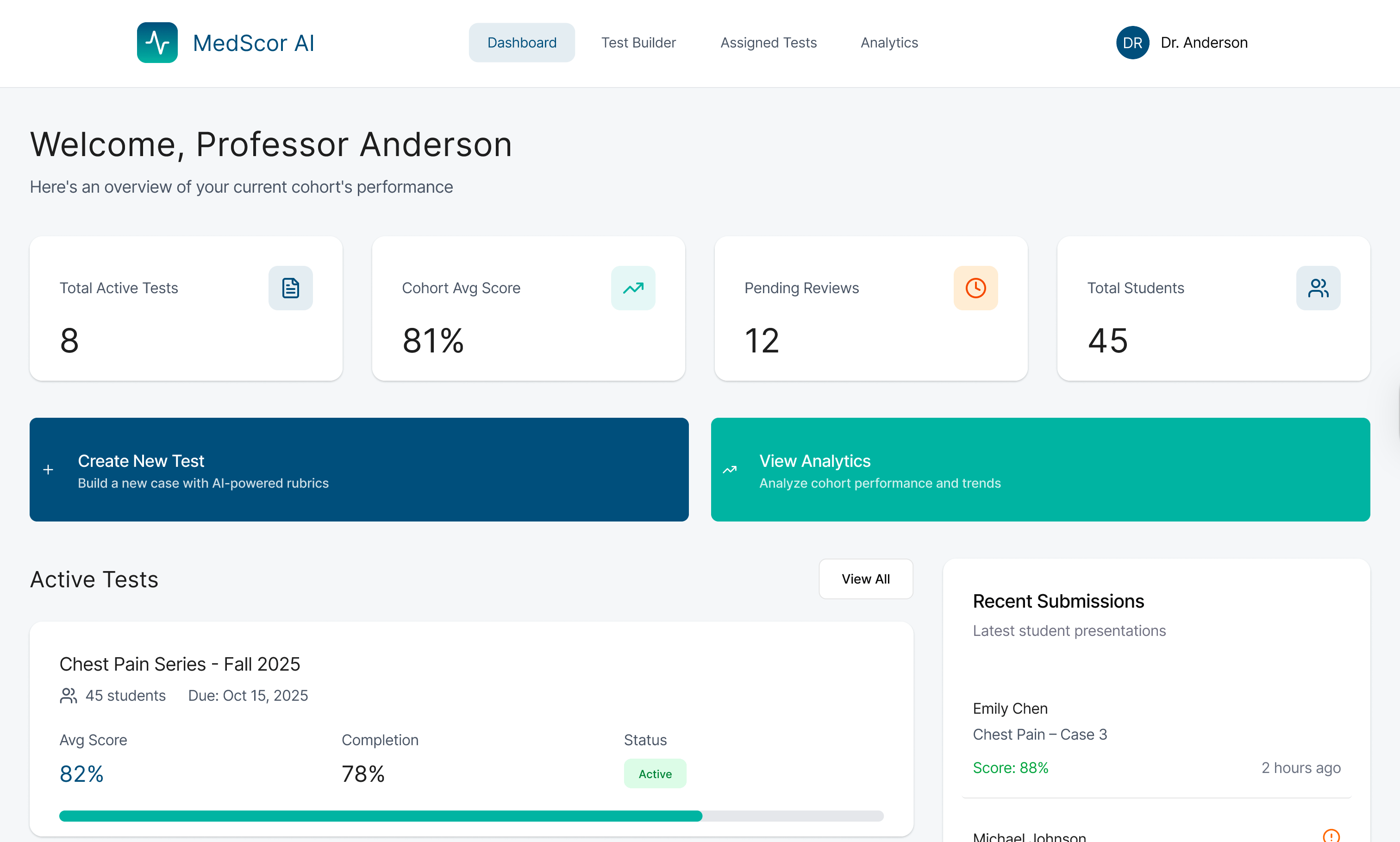

06 — Instructor Flow

Teaching at scale

The instructor experience is built around aggregation and exception handling. The goal: let the AI handle the volume so the instructor can focus human attention where it has the highest impact.
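As a rough sketch of what "aggregation and exception handling" could mean in practice, the snippet below computes class-wide averages per rubric criterion and flags students who repeatedly score low on the same criterion. The record shape, the 0.6 threshold, and the two-note minimum are assumptions chosen for illustration, not values from the prototype.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical record shape: (student, criterion, score as a fraction of max points).
Record = tuple[str, str, float]


def class_averages(records: list[Record]) -> dict[str, float]:
    """Average score per rubric criterion across the whole class."""
    by_criterion = defaultdict(list)
    for _student, criterion, frac in records:
        by_criterion[criterion].append(frac)
    return {criterion: mean(vals) for criterion, vals in by_criterion.items()}


def flag_students(
    records: list[Record],
    threshold: float = 0.6,  # assumed cutoff for "struggling"
    min_notes: int = 2,      # require a pattern, not a single bad note
) -> dict[str, list[str]]:
    """Map each flagged student to the criteria they consistently miss."""
    scores = defaultdict(list)
    for student, criterion, frac in records:
        scores[(student, criterion)].append(frac)
    flagged = defaultdict(list)
    for (student, criterion), vals in scores.items():
        if len(vals) >= min_notes and mean(vals) < threshold:
            flagged[student].append(criterion)
    return dict(flagged)
```

The class averages feed the aggregate view, while the flagged list is the exception queue: the short set of students and criteria that warrant an instructor's direct attention.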

07 — Visual Language

Clinical precision, human warmth

Medical tools often feel cold and institutional. MedScor AI needed to feel trustworthy and professional — but also approachable enough that students wouldn't feel judged by it. The design system balances clinical authority with human warmth.

Color System

Typography

Design Principles

Final Screens

Case Review

Instructor Dashboard

08 — Status

What's shipped, what's next

MedScor AI was built as a hackathon prototype, but the validation we received from judges and clinical educators has opened conversations about taking it further. Here's where things stand.

Shipped

- Full student SOAP note submission flow (5 screens)

- AI feedback display with rubric breakdown

- Instructor class dashboard with aggregate analytics

- Individual student review interface

- AWS Bedrock integration for note evaluation

- Fully interactive Figma prototype

- Design system with 20+ components

Next

- Real user testing with actual medical students

- Rubric customization by institution and specialty

- Mobile-responsive design for on-ward use

- LMS integration (Canvas, Blackboard)

- Longitudinal tracking across full clinical year

- Accessibility audit (WCAG 2.1 AA)

- Institutional pilot program with Rutgers medical school

09 — Reflection

What I learned building fast

A 48-hour deadline forces brutal prioritization. Every design decision had to be justified in seconds, not hours. Here are the three biggest takeaways from this project.

10 — Achievement